Highlights

References

The dataset, the eye movement prediction models and metrics (both spatial and sequential), and the related experiments are described in:

The license agreement for data usage requires citation of the paper above. Please note that citing the dataset URL instead of the publication does not comply with this license agreement.

VOC Actions 2012

This dataset is one of the largest and most challenging available for real-world action recognition in static images. It contains 10 classes: jumping, phoning, playing instrument, reading, riding bike, riding horse, running, taking photo, using computer and walking. It consists of 9157 images, split into a training set of 2296 images, a validation set of 2292 images and a test set of 4569 images.
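
As a quick consistency check, the class list and split sizes above can be written down as plain constants; the three splits add up to the stated total of 9157 images. The label strings below follow the wording of this page and are not necessarily the exact identifiers used in the VOC annotation files.

```python
# Class labels and split sizes of VOC Actions 2012, as listed above.
ACTION_CLASSES = (
    "jumping", "phoning", "playing instrument", "reading", "riding bike",
    "riding horse", "running", "taking photo", "using computer", "walking",
)

SPLIT_SIZES = {"train": 2296, "val": 2292, "test": 4569}


def total_images(splits: dict) -> int:
    """Sum the number of images over all splits."""
    return sum(splits.values())


# Both counts match the figures quoted in the text.
assert len(ACTION_CLASSES) == 10
assert total_images(SPLIT_SIZES) == 9157
```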

Eyetracking Setup and Geometry

Eye movements were recorded using an SMI iView X HiSpeed 1250 tower-mounted eye tracker with a sampling frequency of 500 Hz. The subject's head was placed on a chin rest located 60 cm from the display. Viewing conditions were binocular, and gaze data were collected from the participant's dominant eye. The LCD display had a resolution of 1280 x 1024 pixels and a physical screen size of 47.5 x 29.5 cm. Because image resolution varies across the dataset, each image was rescaled to fit the screen while preserving its original aspect ratio. The visual angles subtended by the stimuli ranged from 12.65 to 43.19 degrees in the horizontal plane and from 8.30 to 27.62 degrees in the vertical plane.
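
The stimulus angles follow from the standard relation θ = 2·atan(s / (2d)) between an on-screen extent s and the viewing distance d. The sketch below reproduces the upper bounds quoted above: the full 47.5 cm width and 29.5 cm height seen from 60 cm give about 43.19 and 27.62 degrees. It assumes the eye is centred on the screen and that small images were enlarged as well as shrunk (the text only says "rescaled to fit"); `fit_to_screen` and `stimulus_angles_deg` are illustrative helpers, not part of any released code. The stated physical size and resolution imply different cm-per-pixel values horizontally and vertically, so the two axes are converted separately.

```python
import math

# Geometry taken from the setup description.
VIEWING_DISTANCE_CM = 60.0
SCREEN_CM = (47.5, 29.5)   # physical width, height
SCREEN_PX = (1280, 1024)   # resolution: width, height


def visual_angle_deg(extent_cm: float, distance_cm: float = VIEWING_DISTANCE_CM) -> float:
    """Full angle subtended by an extent centred on the line of sight."""
    return math.degrees(2.0 * math.atan(extent_cm / (2.0 * distance_cm)))


def fit_to_screen(width_px: int, height_px: int) -> tuple:
    """Illustrative aspect-preserving rescale: largest size that fits the screen.

    Assumes images were enlarged as well as shrunk, which the text does not state.
    """
    scale = min(SCREEN_PX[0] / width_px, SCREEN_PX[1] / height_px)
    return round(width_px * scale), round(height_px * scale)


def stimulus_angles_deg(width_px: int, height_px: int) -> tuple:
    """Horizontal and vertical visual angles of an image after fit_to_screen."""
    w_px, h_px = fit_to_screen(width_px, height_px)
    # The stated size and resolution give different cm-per-pixel values per axis.
    w_cm = w_px * SCREEN_CM[0] / SCREEN_PX[0]
    h_cm = h_px * SCREEN_CM[1] / SCREEN_PX[1]
    return visual_angle_deg(w_cm), visual_angle_deg(h_cm)


# The full screen gives the maximum angles quoted in the text.
print(round(visual_angle_deg(SCREEN_CM[0]), 2), round(visual_angle_deg(SCREEN_CM[1]), 2))
# prints 43.19 27.62
```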

Calibration and Validation Procedures

The calibration procedure was carried out at the beginning of each block. The subject had to follow a target placed sequentially at 13 locations evenly distributed across the screen. Calibration accuracy was then validated at 4 of these calibrated locations. If the error in the estimated position was greater than 0.75 degrees of visual angle, the experiment was stopped and calibration restarted. At the end of each block, validation was carried out again to account for fluctuations in the recording environment. If the validation error exceeded 0.75 degrees of visual angle, the data acquired during the block were deemed noisy and discarded from further analysis. Following this procedure, 0.38% of the data had to be discarded.
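
The 0.75-degree criterion compares the estimated gaze position with the known target position in degrees of visual angle. A minimal sketch of such a check, assuming the eye faces the screen centre and that a validation fails if any single point exceeds the threshold (the text does not say whether the worst or the mean error is used), is shown below; `gaze_ray`, `angular_error_deg` and `validation_passes` are hypothetical helpers.

```python
import math

# Geometry from the setup description; the eye is assumed to face the screen centre.
VIEWING_DISTANCE_CM = 60.0
SCREEN_CM = (47.5, 29.5)
SCREEN_PX = (1280, 1024)
MAX_ERROR_DEG = 0.75  # validation threshold from the text


def gaze_ray(point_px):
    """Direction from the eye to a screen point, in cm (x right, y down, z to screen)."""
    x_cm = (point_px[0] - SCREEN_PX[0] / 2) * SCREEN_CM[0] / SCREEN_PX[0]
    y_cm = (point_px[1] - SCREEN_PX[1] / 2) * SCREEN_CM[1] / SCREEN_PX[1]
    return (x_cm, y_cm, VIEWING_DISTANCE_CM)


def angular_error_deg(target_px, estimate_px):
    """Angle between the lines of sight to the target and to the estimated gaze point."""
    a, b = gaze_ray(target_px), gaze_ray(estimate_px)
    dot = sum(p * q for p, q in zip(a, b))
    cross = (a[1] * b[2] - a[2] * b[1],
             a[2] * b[0] - a[0] * b[2],
             a[0] * b[1] - a[1] * b[0])
    # atan2(|a x b|, a . b) stays accurate for very small angles.
    return math.degrees(math.atan2(math.hypot(*cross), dot))


def validation_passes(targets_px, estimates_px, threshold_deg=MAX_ERROR_DEG):
    """True if no validation point exceeds the threshold (worst-point rule assumed)."""
    errors = [angular_error_deg(t, e) for t, e in zip(targets_px, estimates_px)]
    return max(errors) <= threshold_deg
```

Under these assumptions, a 10-pixel horizontal offset at the screen centre corresponds to roughly 0.35 degrees and would pass, whereas a 100-pixel offset (about 3.5 degrees) would not.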

Subjects

We collected data from 12 human volunteers (5 male and 7 female) aged between 22 and 46. We split them into a group that had to solve an action recognition task (8 subjects) and a group that was asked to perform context recognition (4 subjects). None of our subjects was a cognitive scientist.

Recording Protocol

Before each image was shown, participants in the action recognition group were required to fixate the center of the screen. The image was then displayed automatically, using the trigger area-of-interest feature provided by the iView X software.
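
A fixation trigger of this kind can be sketched as follows, assuming the image starts once gaze has stayed inside a region around the screen centre for a minimum dwell time. This is a generic illustration, not the iView X implementation: the circular region, its 50-pixel radius and the 200 ms dwell time are hypothetical values, not settings reported for this dataset.

```python
SAMPLING_HZ = 500              # eye tracker sampling rate from the setup description
SCREEN_CENTER_PX = (640, 512)  # centre of the 1280 x 1024 display


def trigger_index(samples_px, radius_px=50, dwell_ms=200, center_px=SCREEN_CENTER_PX):
    """Index of the gaze sample at which the image would be shown, or None.

    radius_px and dwell_ms are hypothetical parameters chosen for illustration.
    """
    needed = max(1, round(dwell_ms * SAMPLING_HZ / 1000))  # consecutive samples required
    run = 0
    for i, (x, y) in enumerate(samples_px):
        inside = (x - center_px[0]) ** 2 + (y - center_px[1]) ** 2 <= radius_px ** 2
        run = run + 1 if inside else 0  # any sample outside the region resets the count
        if run >= needed:
            return i
    return None
```

At 500 Hz, a 200 ms dwell corresponds to 100 consecutive samples, so a gaze stream that enters the region at sample 10 and stays there would trigger the image at sample 109.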

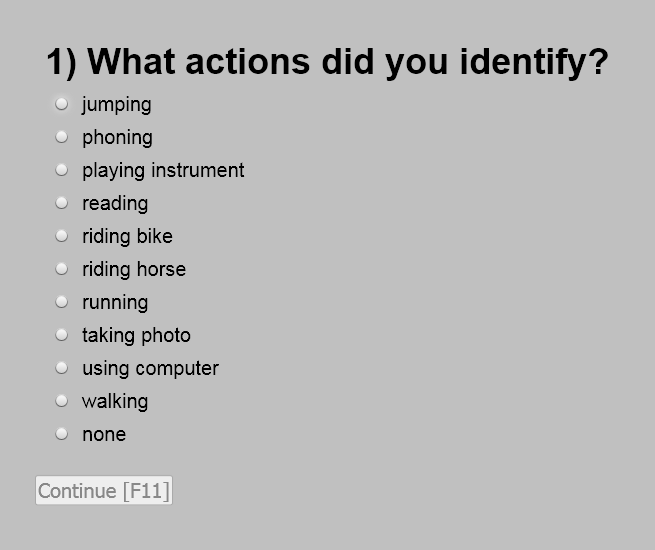

Subjects in the action recognition group were asked to recognize the actions in each image and select them from the labels provided by the Pascal VOC dataset. Subjects in the context recognition group were asked to identify which of 8 contextual elements occur in the background of each image. The contextual elements were: furniture, painting/wallpaper, body of water, building, car/truck, mountain/hill, road and tree. The subjects' multiple-choice answers were recorded through a set of check-boxes displayed at the end of each image exposure, which the subjects operated using a mouse. Exposure times were fixed within each task: 3 seconds for the action recognition group and 2.5 seconds for the context recognition group.
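
Combining the fixed exposure times with the 500 Hz sampling rate gives the nominal length of each recording: 1500 gaze samples per image in the action recognition task and 1250 in the context recognition task (fewer valid samples may remain after blinks or tracking loss). The sketch below encodes this and represents a context-group answer as a set of checked elements; `samples_per_exposure` and `context_answer` are hypothetical helpers, and accepting an empty answer is an assumption.

```python
SAMPLING_HZ = 500                                # eye tracker sampling rate
EXPOSURE_S = {"action": 3.0, "context": 2.5}     # fixed exposure time per task
CONTEXT_ELEMENTS = frozenset({
    "furniture", "painting/wallpaper", "body of water", "building",
    "car/truck", "mountain/hill", "road", "tree",
})


def samples_per_exposure(task: str) -> int:
    """Nominal number of gaze samples recorded while one image is on screen."""
    return round(SAMPLING_HZ * EXPOSURE_S[task])


def context_answer(checked) -> frozenset:
    """Validate a context-group check-box answer (hypothetical helper).

    Any subset of the 8 elements is accepted, including the empty set.
    """
    unknown = set(checked) - CONTEXT_ELEMENTS
    if unknown:
        raise ValueError(f"unknown contextual elements: {sorted(unknown)}")
    return frozenset(checked)
```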

Acknowledgements

This work was supported by a grant from the Romanian National Authority for Scientific Research, CNCS - UEFISCDI, under CT-ERC-2012-1.